In the second edition of the Spring Bootcamp series, we will continue to explore building a web service following REST principles. In the first article we created a few simple GET endpoints; in this article we build out an API that uses the rest of the major HTTP verbs: POST, PUT, and DELETE.

In this article we will also look at using exceptions to control application flow and why you should use constructors for dependency injection, and get a better understanding of the Model, View, Controller application architecture and the benefits of following it.

Speaking Proper REST

I touched on REST briefly in my previous article. Let’s continue exploring REST in this article, by covering some of its key concepts.

Nouns and Verbs

Two key concepts within REST are “nouns” and “verbs”. Within REST, nouns refer to the resources that a web service has domain over. Examples could be orders, accounts, customers, or, in the case of the code example for this article, User.

Verbs within the context of REST refer to HTTP methods. There are nine HTTP methods in total, but only five relate to acting on a noun: GET, POST, PUT, PATCH, and DELETE.

GET – Operation for retrieving a resource.

POST – Operation for creating a resource.

PUT – Operation for updating a resource.

DELETE – Operation for deleting a resource.

PATCH – Operation for partially updating a resource.

REST Endpoint Semantics

The “nouns” and “verbs” create very specific semantics around how a REST API should look. The “nouns” form the URL of endpoint(s), with the “verb” being the HTTP method. The API for the User service we will be creating will look like this:

GET: /api/v1/users: Returns all Users

GET: /api/v1/users/{id}: Retrieve a specific User

POST: /api/v1/users: Create a new User

PUT: /api/v1/users/{id}: Update a User

DELETE: /api/v1/users/{id}: Delete a User

Following a properly RESTful pattern allows for a discoverable API and consistent experience for clients/users who are familiar with REST.
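To make the noun/verb pairing concrete, here is a minimal sketch of what a client request for each endpoint could look like, using the JDK's built-in `java.net.http` API (Java 11+). The requests are only built, not sent. The host and port, the id `42`, and the `name` field in the JSON body are assumptions for illustration, not part of the service code in this article.

```java
import java.net.URI;
import java.net.http.HttpRequest;
import java.net.http.HttpRequest.BodyPublishers;
import java.util.List;

// Builds (but does not send) one request per endpoint of the User API.
// The host, port, id 42, and JSON body are placeholders for illustration.
class UserApiRequests {

    static final String BASE = "http://localhost:8080/api/v1/users";

    static List<HttpRequest> build() {
        String json = "{\"name\":\"Ada\"}"; // hypothetical User payload
        return List.of(
            // GET /api/v1/users: all users
            HttpRequest.newBuilder(URI.create(BASE)).GET().build(),
            // GET /api/v1/users/{id}: one user
            HttpRequest.newBuilder(URI.create(BASE + "/42")).GET().build(),
            // POST /api/v1/users: create a user
            HttpRequest.newBuilder(URI.create(BASE))
                .header("Content-Type", "application/json")
                .POST(BodyPublishers.ofString(json)).build(),
            // PUT /api/v1/users/{id}: update a user
            HttpRequest.newBuilder(URI.create(BASE + "/42"))
                .header("Content-Type", "application/json")
                .PUT(BodyPublishers.ofString(json)).build(),
            // DELETE /api/v1/users/{id}: delete a user
            HttpRequest.newBuilder(URI.create(BASE + "/42")).DELETE().build());
    }

    public static void main(String[] args) {
        for (HttpRequest request : build()) {
            System.out.println(request.method() + " " + request.uri().getPath());
        }
    }
}
```

Notice that the same URL appears with different methods: the noun stays fixed and only the verb changes.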

Note: As covered in the previous article, the /api/v1 portion of the endpoint is part of general good API practice and is not related to REST.

Safe and Idempotent

When creating a REST API it is also important to keep in mind the concepts of safe and idempotent. Safe means a request will not change the state of the resource. Idempotent means running the same request one or more times will produce the same result. Of the five HTTP methods above, only GET is safe. GET, PUT, and DELETE are idempotent, while POST and PATCH are not guaranteed to be.

These are the expected behaviors when using these HTTP methods, and it is important that a service exposing a RESTful API follows them. If executing a GET operation leads to a state change for a resource, this will almost certainly result in unexpected behavior for both the owner of the service and the client(s). Similarly, a PUT operation that gives different results each time it is executed will also be problematic.
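The difference between these behaviors can be sketched without any framework. The class below is a hypothetical in-memory stand-in for a resource collection, not the service built in this article: `create` behaves like POST, `replace` like PUT, and `read` like GET.

```java
import java.util.HashMap;
import java.util.Map;

// In-memory stand-in for a resource collection; names are stored by id.
class IdempotencyDemo {

    private final Map<Long, String> users = new HashMap<>();
    private long nextId = 1;

    // POST-like: every call creates a new resource, so repeating it changes state again.
    long create(String name) {
        long id = nextId++;
        users.put(id, name);
        return id;
    }

    // PUT-like: replaces the resource at a known id; repeating it leaves the same state.
    void replace(long id, String name) {
        users.put(id, name);
    }

    // GET-like: reads without modifying anything (safe).
    String read(long id) {
        return users.get(id);
    }

    int count() {
        return users.size();
    }

    public static void main(String[] args) {
        IdempotencyDemo demo = new IdempotencyDemo();
        demo.create("Ada");
        demo.create("Ada");          // not idempotent: two resources now exist
        demo.replace(1L, "Grace");
        demo.replace(1L, "Grace");   // idempotent: same state as after the first call
        System.out.println(demo.count() + " users, id 1 = " + demo.read(1L));
    }
}
```

A client that retries a timed-out PUT can do so without worry, while a retried POST may create a duplicate. This is why the expectations matter in practice.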

Safe for the Resource, Not the System

A final key point: safe and idempotent relate only to the resource being acted on. State changes can still occur within the service, for example collecting metrics and logging activity about a request. An easy way to conceptualize this is viewing a video on YouTube. YouTube will want to collect metrics about what you are viewing, but you viewing a video shouldn’t change the contents (i.e. the state) of the video itself.

Writing Proper REST

With a better understanding of some key REST concepts, let’s see what they look like in practice. Above we covered five of the HTTP methods, but, as mentioned in the intro, we will be implementing only four of them: GET, POST, PUT, and DELETE. These map closely to the Create, Read, Update, Delete (CRUD) concepts, which will be covered in more detail in a future article on persisting to a database.

In the first article we used @GetMapping to create GET endpoints. Spring similarly offers @PostMapping, @PutMapping, and @DeleteMapping for creating the related types of endpoints. Below is the code for a UserController, which defines endpoints for retrieving all users (findAll()), looking up a specific user by id (findUser()), creating a new user (createUser()), updating an existing user (updateUser()), and deleting a user (deleteUser()):

```java
import java.net.URI;
import java.util.List;

import org.springframework.http.ResponseEntity;
import org.springframework.web.bind.annotation.DeleteMapping;
import org.springframework.web.bind.annotation.ExceptionHandler;
import org.springframework.web.bind.annotation.GetMapping;
import org.springframework.web.bind.annotation.PathVariable;
import org.springframework.web.bind.annotation.PostMapping;
import org.springframework.web.bind.annotation.PutMapping;
import org.springframework.web.bind.annotation.RequestBody;
import org.springframework.web.bind.annotation.RequestMapping;
import org.springframework.web.bind.annotation.RestController;

@RestController
@RequestMapping("/api/v1/users")
public class UserController {

    private final UserService service;

    // Single constructor: Spring injects UserService without @Autowired
    public UserController(UserService service) {
        this.service = service;
    }

    @GetMapping
    public List<User> findAll() {
        return service.findAll();
    }

    @GetMapping("/{userId}")
    public User findUser(@PathVariable long userId) {
        return service.findUser(userId);
    }

    @PostMapping
    public ResponseEntity<User> createUser(@RequestBody User user) {
        User createdUser = service.createUser(user);
        // 201 Created, with a Location header pointing at the new resource
        return ResponseEntity.created(URI.create(String.format("/api/v1/users/%d", createdUser.getId())))
                .body(createdUser);
    }

    @PutMapping("/{userId}")
    public User updateUser(@PathVariable long userId, @RequestBody User user) {
        return service.updateUser(userId, user);
    }

    @DeleteMapping("/{userId}")
    public ResponseEntity<Void> deleteUser(@PathVariable long userId) {
        service.deleteUser(userId);
        return ResponseEntity.ok().build();
    }

    // Maps ClientException to a 400 Bad Request carrying the error message
    @ExceptionHandler(ClientException.class)
    public ResponseEntity<String> clientError(ClientException e) {
        return ResponseEntity.badRequest().body(e.getMessage());
    }

    // Maps NotFoundException to a 404 Not Found with an empty body
    @ExceptionHandler(NotFoundException.class)
    public ResponseEntity<String> resourceNotFound(NotFoundException e) {
        return ResponseEntity.notFound().build();
    }
}
```

Behind the controller is the UserService, which handles the actual business logic, limited as it is, for the web service. In this example I am simply using an ArrayList for “persistence”. Note the usage of exceptions in the service class, which I will touch on in more detail later in this article.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.Optional;
import java.util.Random;

import org.springframework.stereotype.Service;

@Service
public class UserService {

    // In-memory stand-in for a real persistence layer
    private final List<User> users = new ArrayList<>();
    private static final Random ID_GENERATOR = new Random();

    public User findUser(long userId) {
        for (User user : users) {
            if (user.getId().equals(userId)) {
                return user;
            }
        }
        // Results in a 404 via the controller's @ExceptionHandler
        throw new NotFoundException();
        // Alternative that results in a 400 instead:
        // throw new ClientException(String.format("User id: %d not found!", userId));
    }

    public User createUser(User user) {
        user.setId(ID_GENERATOR.nextLong());
        users.add(user);
        return user;
    }

    public User updateUser(long userId, User user) {
        user.setId(userId);
        // User equals looks only at the id field, which is why this works despite
        // looking weird
        if (users.contains(user)) {
            users.remove(user);
            users.add(user);
            return user;
        }
        throw new ClientException(String.format("User id: %d not found!", user.getId()));
    }

    public void deleteUser(long userId) {
        Optional<User> foundUser = users.stream().filter(u -> u.getId().equals(userId)).findFirst();
        if (foundUser.isPresent()) {
            users.remove(foundUser.get());
            return;
        }
        throw new ClientException(String.format("User id: %d not found!", userId));
    }

    public List<User> findAll() {
        return users;
    }
}
```

The code is available on my GitHub repo and you can run it locally to see how it works.
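The User class itself is not shown above, but updateUser depends on its equals method comparing only the id. Here is a minimal sketch of what such a class might look like. Only getId and setId are confirmed by the service code; the `name` field, the constructor, and the `UserEqualityDemo` helper are assumptions for illustration. A class bound with @RequestBody would typically also need a no-argument constructor and public accessors.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.Objects;

// Hypothetical User model: only getId/setId are confirmed by the service code;
// the name field is an assumption added for illustration.
class User {
    private Long id;
    private String name;

    User(Long id, String name) {
        this.id = id;
        this.name = name;
    }

    Long getId() { return id; }
    void setId(Long id) { this.id = id; }
    String getName() { return name; }

    // Equality is based on id alone, so two User objects with the same id
    // count as the same user even if their other fields differ.
    @Override
    public boolean equals(Object other) {
        return other instanceof User && Objects.equals(id, ((User) other).id);
    }

    @Override
    public int hashCode() {
        return Objects.hashCode(id);
    }
}

class UserEqualityDemo {
    // Mirrors the contains/remove/add sequence in updateUser.
    static List<User> replace(List<User> users, User updated) {
        if (users.contains(updated)) {
            users.remove(updated);   // removes the stored user with the same id
            users.add(updated);      // adds the new version
        }
        return users;
    }

    public static void main(String[] args) {
        List<User> users = new ArrayList<>(List.of(new User(1L, "Ada")));
        replace(users, new User(1L, "Ada Lovelace"));
        System.out.println(users.get(0).getName());
    }
}
```

Because contains and remove both rely on equals, the incoming User with the same id matches the stored one, which is then swapped out for the updated version.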

Understanding the Benefits of Model, View, Controller and Separation of Concerns

Model, View, Controller (MVC) is a popular architecture to follow when building a web service, or at least it is in theory. I’ve seen and have built web services where the line between the model, view, and controller has become decidedly blurred. Let’s review the MVC architectural pattern, where developers often go wrong when implementing MVC, and why it matters to follow MVC architecture when building a web service.

MVC Explained

MVC is an architectural pattern that separates a project into three distinct concerns:

Controller – This is the interface the user/client interacts with to use the service. In the above code this would be represented by the UserController class.

Model – The model is the real “meat” of a service. This is where any business processing, persistence, etc. occurs. This is represented by the UserService class.

View – The view is what the user sees. When building a REST API this is largely handled invisibly by Spring, which by default converts returned objects to JSON.

The Wikipedia article on MVC provides a visualization of the above.

Why Good (MVC) Architecture Matters

As the lines between MVC start to blur it can become difficult for a developer to know where to implement new requirements, which sometimes can lead to requirements being accidentally, or even intentionally, implemented in multiple areas. As these issues build up, it can become increasingly difficult to test and maintain an application.

While the User Service we built in this article is very simple, the UserController does represent the level of concern that a controller should contain even in a more complex service. The controller should primarily be concerned with passing values to a service layer and interpreting the return from the service layer to represent back to the user. Inspecting and manipulating the values in a request is a smell that you might be deviating from MVC in a meaningful way.

We will be exploring automated testing in the next article, where we will see the practical benefits of following good architectural practices.

Exceptional Control

Early in my career I was often strongly advised against using exceptions for control flow. Exceptions should be reserved for exceptional conditions: unexpected nulls, failure to connect to a downstream service, incorrect value types, etc. Errors relating to business reasons should be handled with normal application flows: if/else statements, setting a flag value, and so on.

There are reasons to be cautious when using exceptions to handle application flow: generating a stack trace, which happens when an exception is created, is expensive. However, using exceptions for control flow can also make code architecturally cleaner.

In UserService, instead of setting a hasError field in User, I am throwing an exception when a validation check fails, in this case when a client sends a user id that doesn’t match any existing user. I then make use of Spring’s @ExceptionHandler functionality to generate an appropriate response for the user. As seen in UserController, multiple methods can be annotated with @ExceptionHandler, each handling a different exception. This allows for a clean way of handling different error responses:
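If the cost of the stack trace is a concern, one option (not used in this article's code) is to disable stack trace capture for exceptions that represent expected business conditions. The sketch below relies on the protected four-argument RuntimeException constructor available since Java 7. The class names are hypothetical.

```java
// An exception intended for expected, business-level failures.
// Passing writableStackTrace = false skips stack trace capture,
// which removes most of the cost of creating the exception.
class LightweightNotFoundException extends RuntimeException {
    LightweightNotFoundException(String message) {
        // Arguments: message, cause, enableSuppression, writableStackTrace
        super(message, null, false, false);
    }
}

class LightweightExceptionDemo {
    // Throws and catches the exception, returning how many stack frames it recorded.
    static int recordedFrames() {
        try {
            throw new LightweightNotFoundException("User id: 42 not found!");
        } catch (LightweightNotFoundException e) {
            return e.getStackTrace().length;
        }
    }

    public static void main(String[] args) {
        System.out.println("Recorded stack frames: " + recordedFrames());
    }
}
```

The trade-off is that such an exception carries no location information if it ever escapes unhandled, so this is best limited to exceptions that are always caught and translated into a response.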

```java
@ExceptionHandler(ClientException.class)
public ResponseEntity<String> clientError(ClientException e) {
    return ResponseEntity.badRequest().body(e.getMessage());
}

@ExceptionHandler(NotFoundException.class)
public ResponseEntity<String> resourceNotFound(NotFoundException e) {
    return ResponseEntity.notFound().build();
}
```
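As a rough, framework-free analogy of what these handlers achieve, the sketch below runs an action and translates any thrown exception into a status code. The exception class definitions here are assumptions (the article does not show them), and `StatusMappingDemo` is a hypothetical helper. Spring's actual dispatch mechanism is more involved than a try/catch.

```java
// Hypothetical definitions; the article's real classes are not shown.
class ClientException extends RuntimeException {
    ClientException(String message) {
        super(message);
    }
}

class NotFoundException extends RuntimeException {
}

class StatusMappingDemo {
    // Runs an action and translates the outcome into an HTTP status code,
    // roughly the job the @ExceptionHandler methods do in UserController.
    static int statusFor(Runnable action) {
        try {
            action.run();
            return 200;
        } catch (NotFoundException e) {
            return 404;
        } catch (ClientException e) {
            return 400;
        }
    }

    public static void main(String[] args) {
        System.out.println(statusFor(() -> { }));
        System.out.println(statusFor(() -> { throw new NotFoundException(); }));
        System.out.println(statusFor(() -> { throw new ClientException("User id: 7 not found!"); }));
    }
}
```

The business code only throws. The decision about which status code each failure maps to lives in one place, which is the architectural benefit described above.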

To Return 404 or 400 When a Resource Doesn’t Exist?

In UserService I implement two ways of handling what is the same problem: a client sending an id for a user that doesn’t exist. Going by proper REST guidelines, a 404 should be returned. The potential issue is that a 404 could be ambiguous: was it returned because the desired resource doesn’t exist, or because the wrong endpoint is being used?

As mentioned, by REST guidelines the correct choice is clear, 404, but it may not be the correct answer in every use case. The important thing is to document clearly the expected behavior when looking up a non-existent resource and to be consistent across your service(s).

Constructor vs Field Dependency Injection

For a long time many Spring developers had a habit of using field dependency injection. If you were to go into many older Spring projects, including many I wrote myself, you’d see classes that looked something like this:

```java
public class ClassA {

    @Autowired
    private ServiceA serviceA;

    @Autowired
    private ServiceB serviceB;

    // the rest of the class
}
```

In the code snippet above, the members serviceA and serviceB are being supplied via field dependency injection. Configuring dependency injection this way is problematic for two major reasons:

- It makes testing more difficult – In order to test the above class you must either instantiate the Spring application context or set the private fields through reflection, which will slow down test execution and generally increase test complexity.

- It can make it difficult to know a class’s dependencies – Injecting via the constructor creates a kind of contract defining a class’s dependencies. Field injection does not create such a requirement, which can lead to tests breaking in confusing ways, or code breaking for reasons that are difficult to understand, when a new field requiring dependency injection is added.
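By contrast, here is a minimal sketch of the same idea using constructor injection, written in plain Java so it runs without Spring. The `greet` and `welcome` methods are hypothetical, added only to give the classes something to do.

```java
// Hypothetical collaborator; in a Spring application this would be a bean.
interface ServiceA {
    String greet(String name);
}

// Dependencies are declared in the constructor, so the class cannot be
// created without them and the field can be final.
class ClassA {
    private final ServiceA serviceA;

    ClassA(ServiceA serviceA) {
        this.serviceA = serviceA;
    }

    String welcome(String name) {
        return serviceA.greet(name) + "!";
    }
}

class ConstructorInjectionDemo {
    public static void main(String[] args) {
        // No Spring context needed: pass a stub straight into the constructor.
        ServiceA stub = name -> "Hello, " + name;
        ClassA classA = new ClassA(stub);
        System.out.println(classA.welcome("Ada"));
    }
}
```

A test can construct ClassA directly with a stub or mock. If a new dependency is added to the constructor later, every caller that forgets to supply it fails at compile time rather than at runtime.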

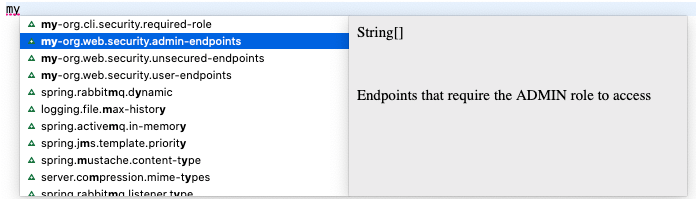

A common critique of Spring is that it’s too “magic”, and a lot of this magic relates back to a reliance on field injection in Spring’s earlier days. To address this, along with updating documentation and code examples to encourage constructor dependency injection, as of Spring Framework 4.3 (Spring Boot 1.4), if a class has only a single constructor, Spring will automatically use that constructor for dependency injection. This removes the need to annotate that constructor with @Autowired. This is the Spring team subtly indicating the preferred way of handling dependency injection.

Conclusion

In the first two articles of this series we learned some good practices for building a RESTful API using Spring Boot. In the next article we will take our first steps into the world of automated testing, one of my favorite subjects!

The code in this article is available on my GitHub repo.